
3/14/2023

Residual sum of squares calculator

We'll learn how to calculate the sum of squares in a minute. The error mean sum of squares, denoted MSE, is calculated by dividing the error sum of squares by its degrees of freedom.

Adjusted R-squared declines when the third variable is added, whereas R-squared increases when the third variable is included. This means the third variable is insignificant to the model.

Adjusted R-squared should be used to compare models with different numbers of independent variables. It should also be used when selecting important predictors (independent variables) for the regression model.

R: Calculate R-Squared and Adjusted R-Squared

Suppose you have actual and predicted values of the dependent variable. In the script, we have created a sample of these values. In this example, `y` refers to the observed dependent variable and `yhat` refers to the predicted dependent variable. The result is printed with `print(R.squared)`. Final result: R-squared = 0.6410828.

Let's assume you have three independent variables in this case: `adj.r.squared = 1 - (1 - R.squared) * ((n - 1)/(n-p-1))`. The adjusted R-squared value is then 0.4616242, assuming 3 predictors and 10 observations.

Python: Calculate Adjusted R-Squared and R-Squared

`R2 = 1 - np.sum((yhat - y)**2) / np.sum((y - np.mean(y))**2)`
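The adjusted R-squared figure quoted above can be checked with a few lines of plain Python. This is a minimal sketch, not the original script: the function name `adjusted_r_squared` is an assumption made for illustration, and only the R-squared value, the number of predictors, and the number of observations are taken from the text.

```python
def adjusted_r_squared(r_squared, n, p):
    """Adjusted R-squared from R-squared, n observations and p predictors.

    Hypothetical helper name; the formula is the one quoted in the text:
    1 - (1 - R.squared) * ((n - 1) / (n - p - 1)).
    """
    if n - p - 1 <= 0:
        raise ValueError("need more than p + 1 observations")
    return 1 - (1 - r_squared) * (n - 1) / (n - p - 1)


# Values quoted in the text: R-squared = 0.6410828, 3 predictors, 10 observations
adj = adjusted_r_squared(0.6410828, n=10, p=3)
print(round(adj, 7))  # 0.4616242
```

The guard on `n - p - 1` is there because the formula divides by the error degrees of freedom, which must be positive.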
Hi, is there a formula in Excel to work out the residual sum of squares of the data, or another way to work it out? I have three lots of Y values and the mean of the Y values, and I am not sure how to work it out.

Adjusted R-squared can be calculated from the value of R-squared, the number of independent variables (predictors), and the total sample size. In the sum-of-squares form of the adjusted R-squared equation, $df_t$ is the degrees of freedom $n - 1$ of the estimate of the population variance of the dependent variable, and $df_e$ is the degrees of freedom $n - p - 1$ of the estimate of the underlying population error variance.

Difference between R-square and Adjusted R-square

Every time you add an independent variable to a model, R-squared increases, even if the independent variable is insignificant; it never declines. Adjusted R-squared, by contrast, increases only when the independent variable is significant and affects the dependent variable. In the table below, adjusted R-squared is at its maximum when two variables are included.

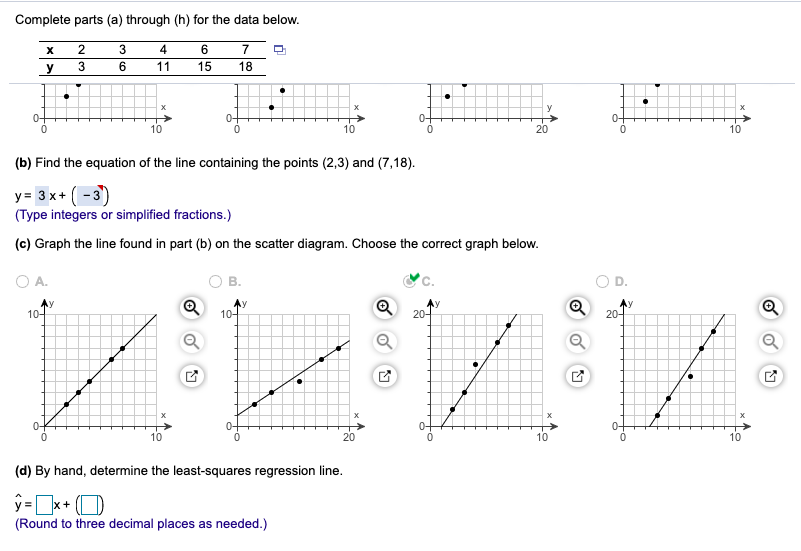
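The two ways of writing adjusted R-squared mentioned in this post (one in terms of sums of squares and degrees of freedom, one in terms of R-squared, n and p) are the same quantity. The short derivation below is a sketch of why, assuming the standard definitions; the symbol $R^2_{adj}$ is notation introduced here for illustration, with $df_t = n - 1$ and $df_e = n - p - 1$.

```latex
\begin{align*}
R^2_{adj} &= 1 - \frac{SS_{res} / df_e}{SS_{tot} / df_t}
           = 1 - \frac{SS_{res}}{SS_{tot}} \cdot \frac{df_t}{df_e} \\
          &= 1 - (1 - R^2)\,\frac{n - 1}{n - p - 1}
           \qquad \text{since } \frac{SS_{res}}{SS_{tot}} = 1 - R^2
\end{align*}
```

Because $(n - 1)/(n - p - 1)$ grows as $p$ grows, an extra predictor has to reduce $SS_{res}$ enough to offset that factor, otherwise the adjusted value falls.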

What does this residual calculator do? It takes the data you have provided for X and Y and calculates the linear regression model, step by step. Indeed, the idea behind least squares linear regression is to find the regression parameters that minimize the sum of squared residuals.

Adjusted R-squared measures the proportion of variation explained by only those independent variables that really help in explaining the dependent variable. It penalizes you for adding independent variables that do not help in predicting the dependent variable. Adjusted R-squared can be calculated mathematically in terms of sums of squares. The only difference between the R-squared and adjusted R-squared equations is the degrees of freedom.

An R-squared value of 0% means the model has zero predictive power. The higher the R-squared value, the better the model.
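The least squares idea described above (pick the regression parameters that minimize the sum of squared residuals) can be sketched for a single predictor as follows. This is an illustrative sketch, not the calculator's actual implementation: the function names and the sample data are hypothetical, and it uses the standard closed-form solution for a straight-line fit.

```python
def fit_line(x, y):
    """Fit y = intercept + slope * x by ordinary least squares (closed form)."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return intercept, slope


def residual_sum_of_squares(x, y, intercept, slope):
    """Sum of squared residuals (observed minus fitted) for the given line."""
    return sum((yi - (intercept + slope * xi)) ** 2 for xi, yi in zip(x, y))


# Hypothetical data, for illustration only
x = [1.0, 2.0, 3.0, 4.0, 5.0]
y = [2.0, 4.0, 5.0, 4.0, 5.0]

intercept, slope = fit_line(x, y)
rss = residual_sum_of_squares(x, y, intercept, slope)
print(intercept, slope, rss)
```

Nudging either fitted parameter away from its least squares value and recomputing `residual_sum_of_squares` gives a larger total, which is what "minimizing the sum of squared residuals" means in practice.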

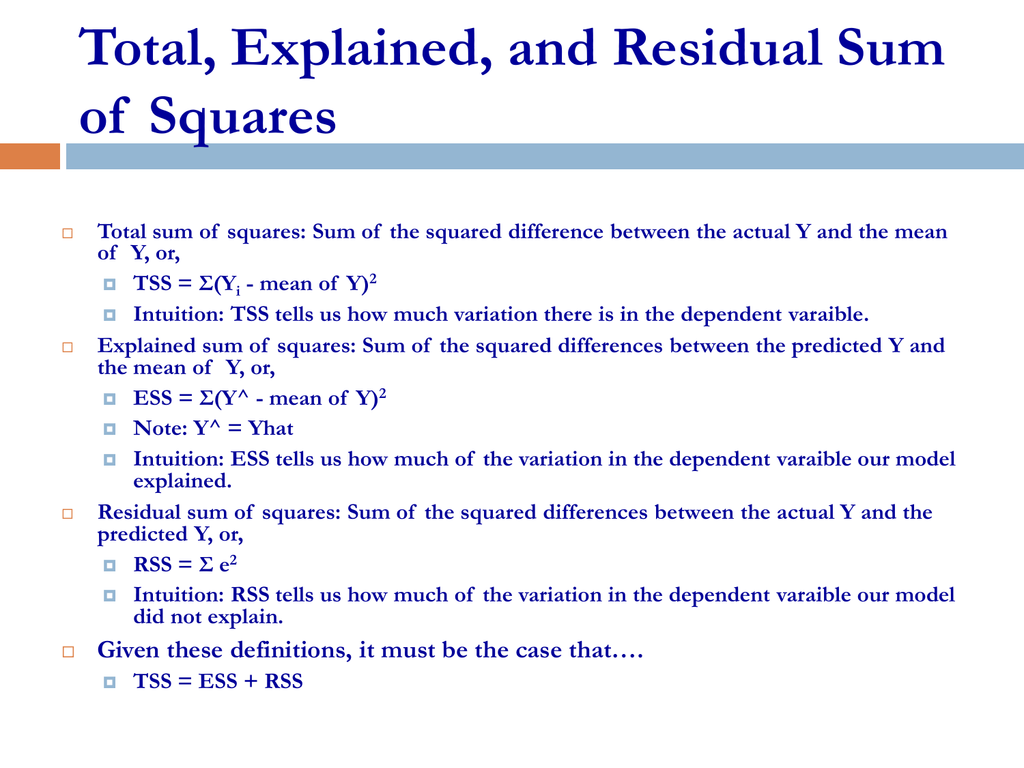

In the R-squared formula, SStot measures total variation, SSreg measures explained variation, and SSres measures unexplained variation. As SSres + SSreg = SStot, R² = Explained variation / Total variation. R-squared is also called the coefficient of determination. An R-squared value of 100% means the model explains all the variation of the target variable.
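For reference, the three sums of squares named above can be written out as follows. This is a sketch using the standard definitions, which the post itself does not spell out: $y_i$ are the observed values, $\hat{y}_i$ the fitted values, and $\bar{y}$ the mean of the observed values. The decomposition SSres + SSreg = SStot is assumed to hold, as it does for a least squares fit that includes an intercept.

```latex
\begin{align*}
SS_{tot} &= \sum_{i=1}^{n} (y_i - \bar{y})^2, &
SS_{reg} &= \sum_{i=1}^{n} (\hat{y}_i - \bar{y})^2, &
SS_{res} &= \sum_{i=1}^{n} (y_i - \hat{y}_i)^2 \\
R^2 &= \frac{SS_{reg}}{SS_{tot}} = 1 - \frac{SS_{res}}{SS_{tot}}
\end{align*}
```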

In this tutorial, we will cover the difference between R-squared and adjusted R-squared. It includes a detailed theoretical and practical explanation of these two statistical metrics in R.

R-squared measures the proportion of the variation in your dependent variable explained by all of the independent variables in the model. It assumes that every independent variable in the model helps to explain variation in the dependent variable. In reality, some independent variables (predictors) do not help to explain the dependent (target) variable; in other words, some variables do not contribute to predicting the target variable.

Mathematically, R-squared is calculated by dividing the sum of squares of residuals (SSres) by the total sum of squares (SStot) and then subtracting the result from 1: R² = 1 − SSres / SStot.
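The calculation just described can be sketched in plain Python. The function name `r_squared` and the sample values are hypothetical, chosen only to illustrate the formula; `y` holds observed values and `yhat` holds predicted values.

```python
def r_squared(y, yhat):
    """Return R-squared = 1 - SSres / SStot for observed y and predicted yhat."""
    if len(y) != len(yhat):
        raise ValueError("y and yhat must have the same length")
    mean_y = sum(y) / len(y)
    # Residual sum of squares: unexplained variation
    ss_res = sum((obs - pred) ** 2 for obs, pred in zip(y, yhat))
    # Total sum of squares: variation of y around its mean
    ss_tot = sum((obs - mean_y) ** 2 for obs in y)
    return 1 - ss_res / ss_tot


# Hypothetical values, for illustration only
y = [3.0, 5.0, 7.0, 9.0]
yhat = [2.5, 5.5, 6.5, 9.5]
print(r_squared(y, yhat))
```

With these made-up numbers SSres is 1 and SStot is 20, so the result is close to 0.95; predictions that match the observations exactly give 1.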
